Hierarchical Planning with Latent World Models

- Hierarchical MPC in a shared latent space, enabling direct subgoal transfer across levels without policies, skills, or task-specific rewards

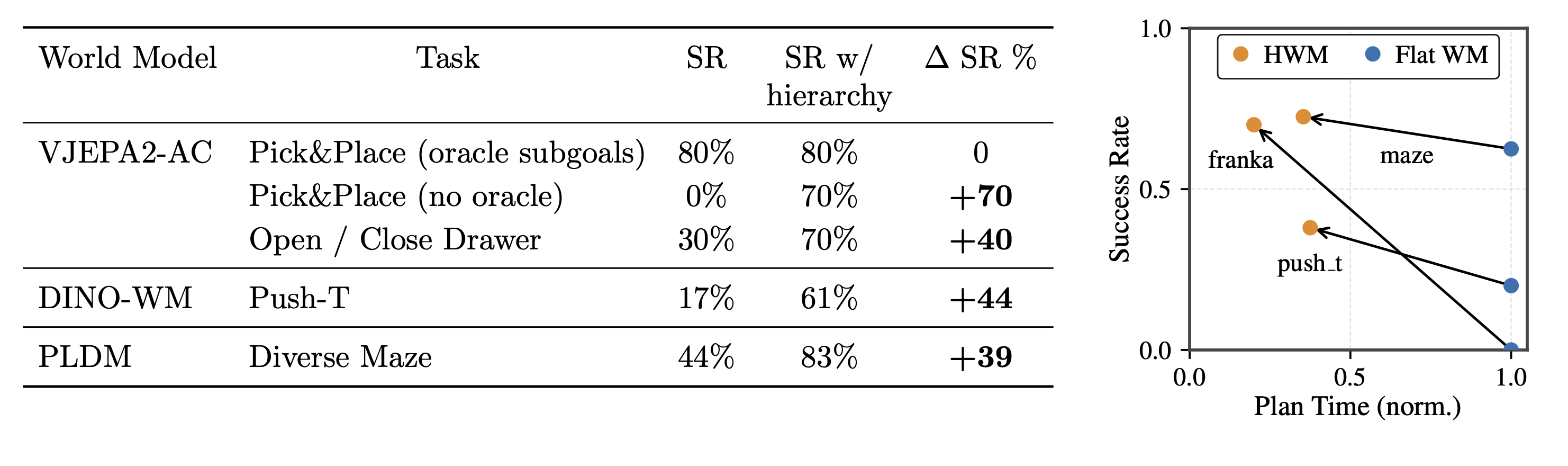

- Unlocks zero-shot non-greedy planning from images, achieving 70% success on real-robot pick-and-place from a single goal image (vs. 0% for the flat VJEPA2-AC planner)

- Model-agnostic planning abstraction, consistently improving diverse latent world models (VJEPA2-AC, DINO-WM, PLDM)

- Better performance and efficiency on long-horizon tasks, with up to +44% absolute success rate gains and up to 4x lower planning cost

Abstract

Planning with learned world models has emerged as a promising paradigm for zero-shot embodied control, but even strong flat planners such as VJEPA2-AC fail on long-horizon, non-greedy tasks due to compounding prediction error and exponentially expanding search. Hierarchy is the natural remedy, but existing approaches are limited in complementary ways: hierarchical RL amortizes control into task-specific policies, while classical hierarchical model predictive control (MPC) has been restricted to low-dimensional state or known dynamics. We present Hierarchical Planning with Latent World Models (HWM), the first demonstration that hierarchical planning can be applied directly to world models trained solely by next-latent prediction, enabling zero-shot control from pixels with hierarchical decomposition realized entirely at inference time. The formulation trains latent world models at different temporal scales within a shared latent space, so predictions from a coarser model act directly as subgoals for finer-scale MPC via latent matching. A learned action encoder compresses primitive-action chunks into latent macro-actions, keeping long-horizon search tractable. On real-world Franka manipulation, HWM solves pick-and-place from a single goal image with 70% success, whereas the flat VJEPA2-AC planner achieves 0%, and it outperforms strong vision-language-action baselines trained on about 77x more robotic data. Across simulated push manipulation and maze navigation, HWM consistently improves success while requiring up to 3x less planning compute.

Hierarchical Planning

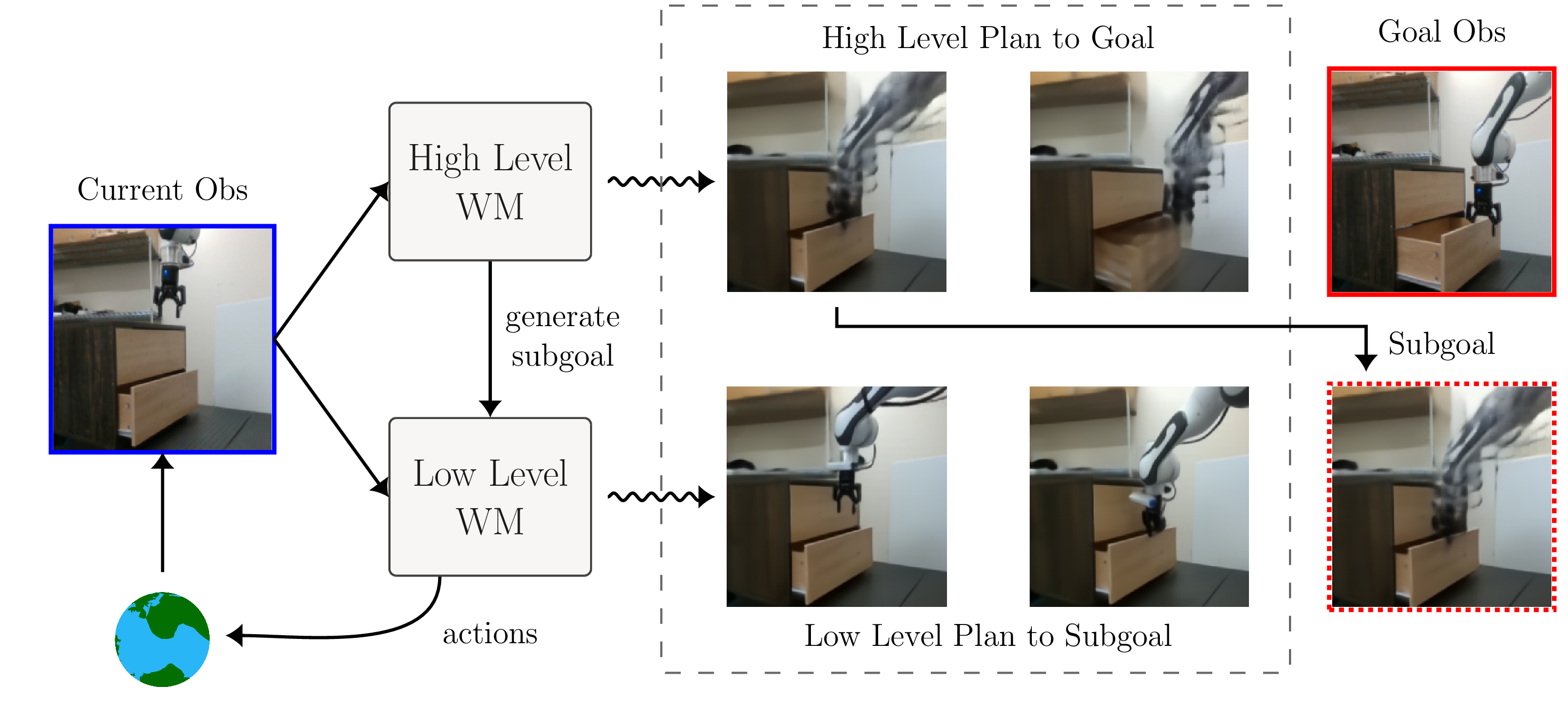

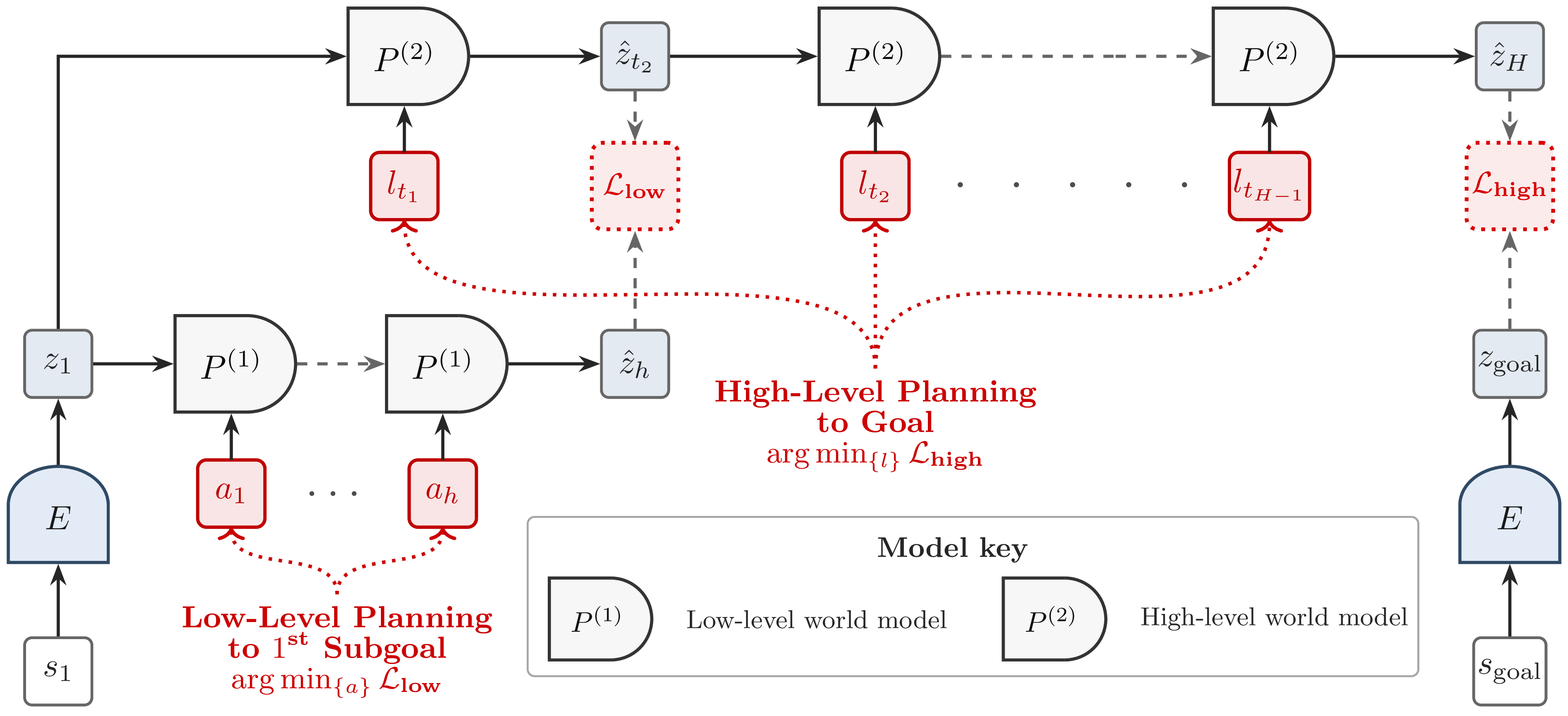

A high-level planner optimizes macro-actions using a long-horizon latent world model to reach the final goal embedding. The first latent state of the resulting predicted trajectory serves as the subgoal for low-level planning, where a short-horizon world model optimizes primitive actions to reach it.
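The two-level loop can be illustrated with a toy sketch. Everything in it is a hypothetical stand-in chosen so the example runs without learned models: 2-D integer latents, additive "world models", a macro-action standing for the net effect of K = 4 primitive steps, and exhaustive search with an $\ell_1$ latent-matching cost in place of the sampling-based optimizer a real planner would likely use. Only the structure follows the description above: the high level plans toward the goal embedding, its first predicted latent becomes the subgoal, and the low level plans primitive actions toward that subgoal, executing only the first one before replanning.

```python
from itertools import product

# Hypothetical toy setup (not the paper's learned models): latents are 2-D
# tuples, the low-level model adds a primitive action a in {-1,0,1}^2, and the
# high-level model adds a macro-action m in {-K,0,K}^2 that stands in for the
# net effect of K primitive steps.
K = 4
PRIMITIVES = list(product((-1, 0, 1), repeat=2))
MACROS = list(product((-K, 0, K), repeat=2))


def low_level_model(z, a):
    return tuple(zi + ai for zi, ai in zip(z, a))


def high_level_model(z, m):
    return tuple(zi + mi for zi, mi in zip(z, m))


def l1(u, v):
    return sum(abs(ui - vi) for ui, vi in zip(u, v))


def plan(model, z0, target, actions, horizon):
    """Exhaustive-search planner: roll out every action sequence of length
    `horizon` and keep the first one whose final latent is closest (L1) to
    `target`. Returns (action sequence, predicted latents)."""
    best_seq, best_traj, best_cost = None, None, float("inf")
    for seq in product(actions, repeat=horizon):
        traj, z = [], z0
        for act in seq:
            z = model(z, act)
            traj.append(z)
        cost = l1(traj[-1], target)
        if cost < best_cost:
            best_seq, best_traj, best_cost = seq, traj, cost
    return best_seq, best_traj


def hierarchical_step(z, z_goal, high_horizon=2):
    # High level: search macro-actions that bring the latent to the goal.
    _, high_traj = plan(high_level_model, z, z_goal, MACROS, high_horizon)
    subgoal = high_traj[0]  # first predicted latent becomes the subgoal
    # Low level: search K primitive actions that reach the subgoal.
    low_seq, _ = plan(low_level_model, z, subgoal, PRIMITIVES, K)
    return subgoal, low_seq[0]  # MPC executes only the first primitive action


subgoal, action = hierarchical_step((0, 0), (8, -4))
print(subgoal, action)  # (4, -4) (1, -1)
```

In this toy, one hierarchical step searches 9^2 + 9^4 = 6,642 candidate sequences, whereas a flat search over the same 8 primitive steps would enumerate 9^8 = 43,046,721, which illustrates why splitting the horizon keeps search tractable.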

Training

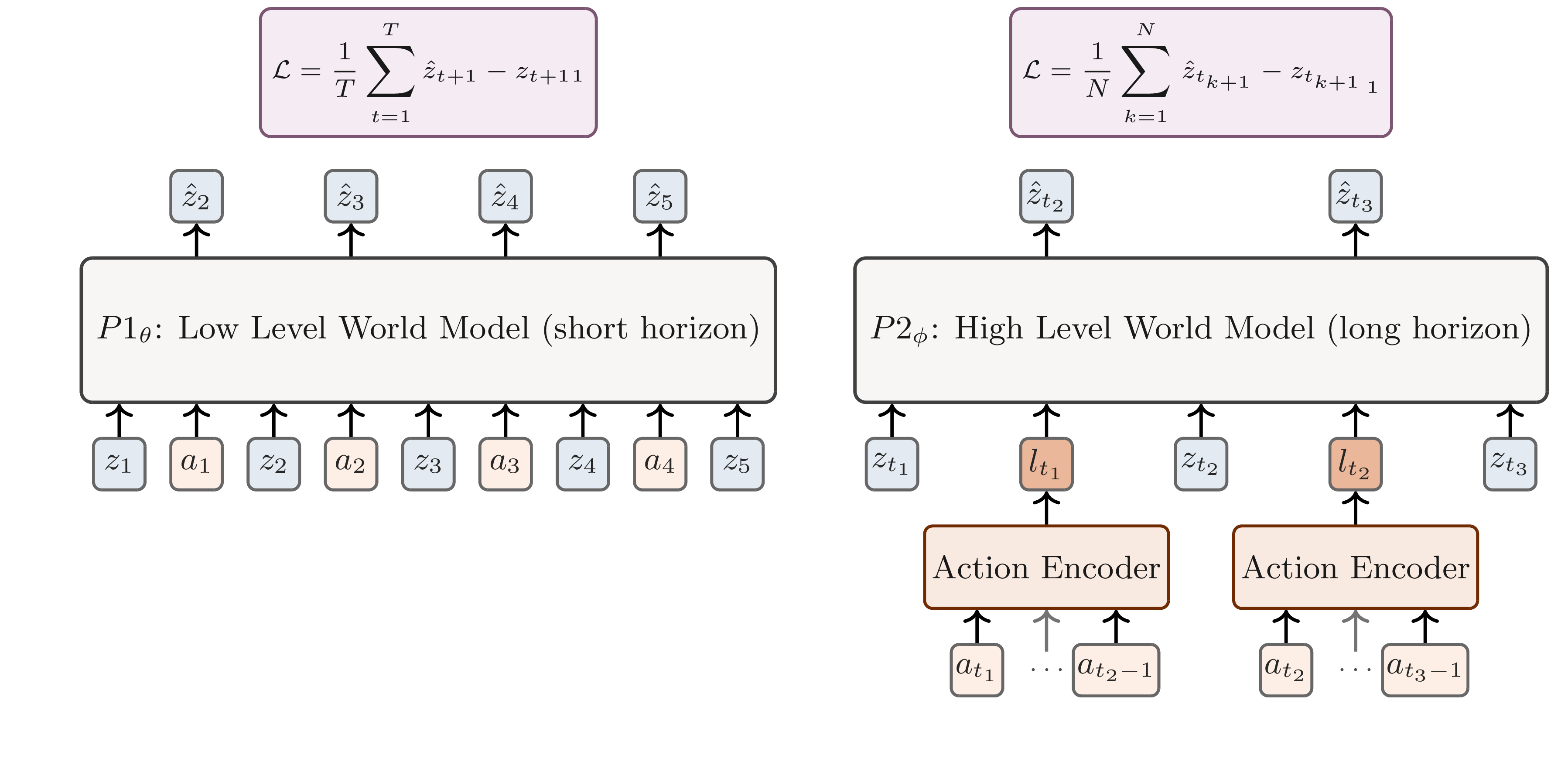

Training latent world models at multiple timescales. We train two predictors that operate in a shared latent space but at different temporal resolutions. The low-level predictor models short-horizon dynamics conditioned on latent states and primitive actions, producing step-by-step predictions of future latents. The high-level predictor models long-horizon dynamics via latent macro-actions, which are obtained by encoding sequences of low-level actions via an action encoder. Both predictors operate causally and are trained with an $\ell_1$ latent prediction loss.
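The sketch below shows how one trajectory could supply targets for both predictors in the shared latent space, assuming a chunk size K, non-overlapping action chunks for the high level, and per-frame latents already produced by the encoder. The action encoder and both predictors are hypothetical placeholders (a chunk sum and additive updates on the current latent only) rather than the learned, causal networks described above; only the data layout and the $\ell_1$ loss are meant to carry over.

```python
# Hypothetical placeholders: the described action encoder and predictors are
# learned networks that condition causally on history; here the encoder sums
# a chunk and each predictor applies an additive update to the current latent.

def l1(u, v):
    return sum(abs(ui - vi) for ui, vi in zip(u, v))


def action_encoder(chunk):
    """Compress K primitive actions into one macro-action (here: their sum)."""
    return tuple(sum(step) for step in zip(*chunk))


def low_level_predictor(z, a):
    return tuple(zi + ai for zi, ai in zip(z, a))


def high_level_predictor(z, m):
    return tuple(zi + mi for zi, mi in zip(z, m))


def multiscale_losses(latents, actions, K):
    """Mean l1 losses for both levels. latents[t] encodes frame t and
    actions[t] is the primitive action taken between frames t and t+1.
    Both levels regress onto frames of the same latent sequence."""
    T = len(actions)
    low = [l1(low_level_predictor(latents[t], actions[t]), latents[t + 1])
           for t in range(T)]
    # Non-overlapping chunks (an assumption): frame t -> frame t + K.
    high = [l1(high_level_predictor(latents[t], action_encoder(actions[t:t + K])),
               latents[t + K])
            for t in range(0, T - K + 1, K)]
    return sum(low) / len(low), sum(high) / len(high)


actions = [(1, 0), (1, 0), (0, 1), (0, 1)]
latents = [(0, 0), (1, 0), (2, 0), (2, 1), (2, 3)]  # last transition overshoots
print(multiscale_losses(latents, actions, K=2))  # (0.25, 0.5)
```

Training would then minimize both losses with gradient descent over the predictor and action-encoder parameters; because the high-level targets are frames of the same latent sequence, its predictions stay comparable to the low-level ones.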

Main Results

Hierarchical Planning with VJEPA2-AC: Pick-&-Place w/ Franka Arm

Zero-shot robotic manipulation with the Franka dual-gripper, including pick-and-place and drawer manipulation. The single-level planner (VJEPA2-AC) succeeds only when the task is explicitly decomposed into greedy subtasks via manually provided subgoals. In contrast, hierarchical planning enables end-to-end task execution using only a final goal image.

Hierarchical Planning with DINO-WM: Push-T

Left: Push-T Performance Across Task Horizons. In each trial, a random start-goal pair $d$ timesteps apart is sampled from a validation trajectory. Right: Hierarchical planning achieves higher success while using up to 3x less test-time compute than flat planners.
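A minimal sketch of this sampling protocol, assuming a validation trajectory is simply a list of states and that the start index is drawn uniformly (how the start is chosen is not specified here); `sample_pair_from_trajectory` is a hypothetical helper name:

```python
import random


def sample_pair_from_trajectory(trajectory, d, rng):
    """Return (start, goal) states that are exactly d timesteps apart."""
    if not 0 < d < len(trajectory):
        raise ValueError("need 0 < d < len(trajectory)")
    t = rng.randrange(len(trajectory) - d)  # start index such that t + d is valid
    return trajectory[t], trajectory[t + d]


rng = random.Random(0)
states = list(range(10))  # placeholder states; real ones would be observations
start, goal = sample_pair_from_trajectory(states, 3, rng)
```

Sweeping `d` over several values then yields the performance-versus-horizon curve.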

Hierarchical Planning with PLDM: Maze Navigation

Left: Diverse Maze Performance Across Task Horizons. Agents are evaluated zero-shot on navigation tasks in test maps with layouts unseen during training. Start and goal positions are randomly sampled at varying grid distances. Right: Hierarchical planning achieves higher success while using up to 3x less test-time compute than flat planners.
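Sampling start and goal cells at a fixed grid distance could look like the sketch below. It assumes "grid distance" means the number of shortest-path steps between free cells in a 4-connected grid and uses a hypothetical character map ('.' free, '#' wall); the actual maze environments may define distance differently.

```python
import random
from collections import deque


def bfs_distances(grid, start):
    """Shortest-path step counts from `start` to every reachable free cell."""
    rows, cols = len(grid), len(grid[0])
    dist = {start: 0}
    queue = deque([start])
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] == "." and (nr, nc) not in dist):
                dist[(nr, nc)] = dist[(r, c)] + 1
                queue.append((nr, nc))
    return dist


def sample_maze_pair(grid, d, rng, max_tries=1000):
    """Pick a random free start cell and a goal exactly d steps away."""
    free = [(r, c) for r, row in enumerate(grid)
            for c, ch in enumerate(row) if ch == "."]
    for _ in range(max_tries):
        start = rng.choice(free)
        candidates = [cell for cell, k in bfs_distances(grid, start).items() if k == d]
        if candidates:
            return start, rng.choice(candidates)
    raise ValueError(f"no start-goal pair found at distance {d}")


MAZE = ["....#",
        ".##.#",
        "....."]
start, goal = sample_maze_pair(MAZE, 4, random.Random(0))
```

Using path distance rather than straight-line distance means a larger `d` reliably corresponds to a longer route around walls.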